The Deepfake Nudes Crisis in Schools Is Much Worse Than You Thought

Last edited Thu Apr 16, 2026, 10:08 AM

Wired

https://www.wired.com/story/deepfake-nudify-schools-global-crisis/

I forgot to clear cookies, so I exceeded the free article limit.

https://archive.ph/ZSW1a

An analysis by WIRED and Indicator found nearly 90 schools and 600 students around the world impacted by AI-generated deepfake nude images—and the problem shows no signs of going away.

The true scale of deepfake sexual abuse taking place in schools is likely much higher.

Lonnnnng Hacker News discussion:

https://news.ycombinator.com/item?id=47779856

Around the world, teenage boys are saving Instagram and Snapchat images of girls they know from school and using harmful “nudify” apps to create fake nude photos or videos of them. These deepfakes can quickly be shared across whole schools, leaving victims feeling humiliated, violated, hopeless, and scared the images will haunt them forever.

The deepfake crisis hitting schools started slowly a couple of years ago, but it has since grown considerably as the technology used to create the explicit imagery has become more accessible. Deepfake sexual abuse incidents have hit around 90 schools globally and have impacted more than 600 pupils, according to a review of publicly reported incidents by WIRED and Indicator, a publication focusing on digital deception and misinformation.

The findings show that since 2023, schoolchildren—most often boys in high schools—in at least 28 countries have been accused of using generative AI to target their classmates with sexualized deepfakes. The explicit imagery, containing minors, is considered to be child sexual abuse material (CSAM). This analysis is believed to be the first to review real-world cases of AI deepfake abuse taking place at schools globally.

As a whole, the analysis shows the worldwide reach of harmful AI nudification technology, which can earn their creators millions of dollars per year, and shows that in many incidents, schools and law enforcement officials are often not prepared to respond to the serious sexual abuse incidents.

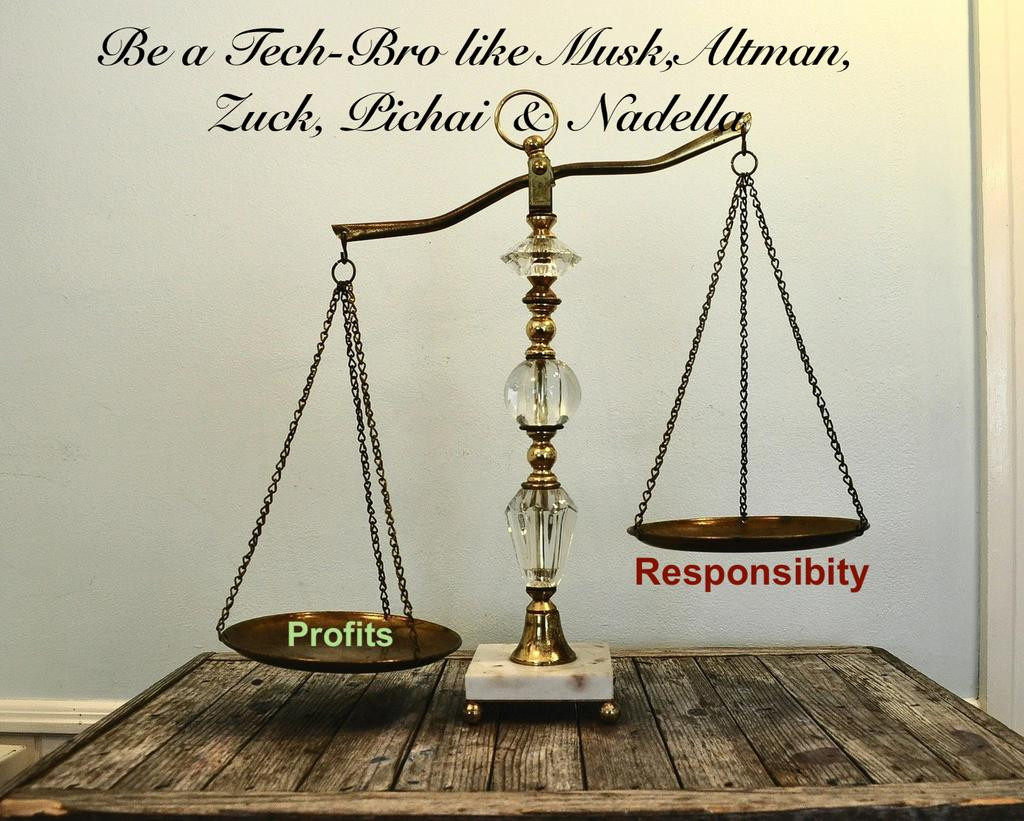

Ruining schoolkids' lives? WE DON'T GIVE A FLYING FU*K

And thanks for all the money, assholes.

Update.

Apple Threatened to Pull Grok From App Store Over Sexualized Images

Earlier this year, Grok's AI capabilities came under scrutiny after X users shared nonconsensual sexualized images of women and children created by the app, many of which were based on photos of real people.

What followed was a confusing rollout of moderation changes to Grok, some of which could be easily bypassed. Publicly, Apple did not comment on the controversy at the time, but it did respond, and was in fact the instigator of the changes. Internally, the company had found both X and Grok in violation of its App Store guidelines and demanded its developers submit a content moderation plan, the letter reveals.

According to the letter, Apple rejected an initial fix from xAI as insufficient, saying the "changes didn't go far enough," and Apple warned it that additional alterations were required or Grok would be removed. After further back-and-forth, however, Apple eventually concluded that a later submission of the app had improved enough for it to be approved.

snip

Lying sicko X (Musk) said:

"We strictly prohibit users from generating non-consensual explicit deepfakes and from using our tools to undress real people. xAI has extensive safeguards in place to prevent such misuse, such as continuous monitoring of public usage, analysis of evasion attempts in real time, frequent model updates, prompt filters, and additional safeguards."

Safeguards are easily bypassed with creative prompts.

Source: https://www.macrumors.com/2026/04/15/apple-threatened-pull-grok-from-app-store/

The original story at NBC is paywalled.

MONEY MONEY MONEY.